Combatting Terrorist Content: The Social Media Challenge

On Tuesday, November 5, the 9/11 Memorial & Museum will host a public program “Combating Terrorist Content: The Social Media Challenge,” which will feature speakers from Facebook and the Harvard Kennedy School, starting at 7 p.m.

The media spotlight is set squarely on Facebook following the recent House Financial Services Committee hearing, at which Mark Zuckerberg, cofounder and CEO of Facebook, testified to congressional leaders about the cryptocurrency project Libra. During a speech at Georgetown University in October, Zuckerberg explained that Facebook’s approach to content, which favors letting people say whatever they want, is part of an American tradition of free speech in the marketplace of ideas. However, is “freedom of speech” the same as “freedom of reach”?

Earlier this year, social media platforms came under scrutiny for the amplification of extremist content and the live streaming of the Christchurch, New Zealand, terror attack. Given that there is no internationally accepted definition of terrorism, how do social media platforms around the world define, restrict, and remove terrorist content without infringing on people’s freedom of speech? Additionally, how do these platforms go about moderating this content in a timely manner? For instance, more than 500 hours of video are uploaded to YouTube every single minute, which equates to approximately 1,250 days’ worth of newly uploaded content per hour.

Following the Christchurch attack, French President Emmanuel Macron and New Zealand Prime Minister Jacinda Ardern led a group of world leaders, tech companies, and organizations in adopting a pledge that seeks to eliminate terrorist and violent extremist content online and to stop the internet from being used as a tool for terrorists.

The Global Internet Forum to Counter Terrorism (GIFCT) has taken steps to implement this Christchurch Call to Action. GIFCT was formally established in July 2017 as a group of companies dedicated to disrupting terrorist abuse of members’ digital platforms. The original forum was led by a rotating chair drawn from the founding four companies—Facebook, Microsoft, Twitter, and YouTube—and managed a program of knowledge-sharing, technical collaboration, and shared research.

As the government continues to delegate responsibility for countering extremism online to private industry, it raises the question: whose responsibility is it to fight terrorism online?

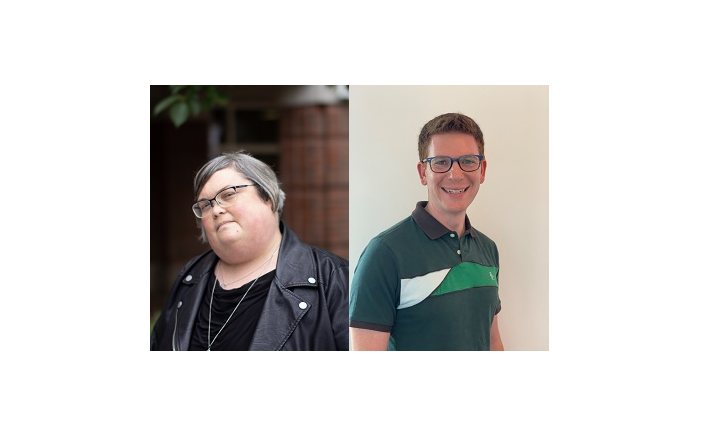

The 9/11 Memorial Museum is delighted to welcome David Tessler and Dr. Joan Donovan, experts in this field, to share their work and discuss these pressing questions.

David Tessler is a public policy manager on the Dangerous Organization team at Facebook. He joined the company in May 2019, after almost 20 years of public service in the U.S. Federal Government. Most recently, David was at the State Department where he held a variety of positions, including acting sanctions coordinator and deputy director of policy planning. David also worked for seven years at the Treasury Department, where he focused on sanctions policy and counter-terrorist financing.

Dr. Joan Donovan is director of the Technology and Social Change Research Project at the Shorenstein Center on Media, Politics, and Public Policy at Harvard Kennedy School of Government. Her work focuses on media manipulation tactics and techniques, effects of disinformation campaigns, and adversarial media movements that target journalists.

Reserve tickets to this program, and learn more about the fall 2019 public program season at the 9/11 Memorial Museum.

By 9/11 Memorial Staff
